(http://www.digimoon.net/)

※ References

http://behindtheracks.com/2014/11/openstack-juno-scripted-install-with-neutron-on-centos-7/

▲ A huge thank-you to the owner of this blog and to my team lead for sharing such great information. ^^

http://docs.openstack.org/juno/install-guide/install/yum/content/

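▲ OpenStack documents written by individuals and companies are scattered all over the internet, so the information is badly fragmented(?). For the architecture diagram and the terminology, I have tried to follow the originals on the official OpenStack website as faithfully as possible. Defining terms precisely and using them consistently is a small(?) effort, but isn't it the foundation of "standardization"...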

Contents

1. Prerequisites

2. Installing the Controller Node

3. Installing the Network Node

4. Installing the Compute Node

5. Creating the Initial Networks

1. Prerequisites

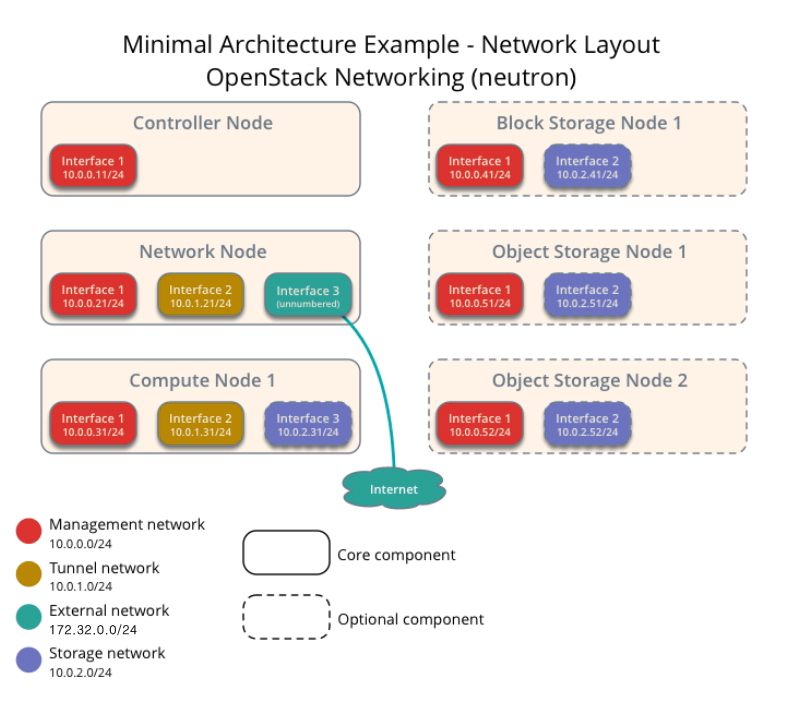

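This guide describes a setup that follows the three-node architecture with OpenStack Networking (Neutron) from the official OpenStack Juno documentation.

The Controller Node, Network Node, and Compute Node are required for the three-node architecture; the Block Storage Node and Object Storage Node are optional.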

▲ Image source: official OpenStack website

http://docs.openstack.org/juno/install-guide/install/yum/content/ch_basic_environment.html#basics-packages

① Install CentOS 7 on every node and configure its network interfaces. If you build only the three required nodes, without a Block Storage Node or an Object Storage Node, you do not need the Storage network.

② Make sure every node can resolve the other nodes' hostnames over the Management network (10.0.0.0/24), using either /etc/hosts or DNS.
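A minimal sketch of step ② using /etc/hosts. The hostnames are assumptions taken from the shell prompts later in this guide (juno-controller, juno-network, juno-compute) and the IPs are the Management addresses used in the install scripts. `add_host_entries` is a hypothetical helper, not part of the original scripts; it only appends entries whose hostname is not already present, so it is safe to run more than once.

```shell
#!/bin/bash
# Hypothetical helper: append Management-network host entries to a hosts file,
# skipping any hostname that is already listed. Defaults to /etc/hosts.
add_host_entries() {
  local hosts_file=${1:-/etc/hosts}
  # Assumed name/IP pairs (IPs from the install scripts, names from the prompts)
  local entries=(
    "10.0.0.11 juno-controller"
    "10.0.0.21 juno-network"
    "10.0.0.31 juno-compute"
  )
  local entry name
  for entry in "${entries[@]}"; do
    name=${entry#* }                      # hostname = text after the first space
    if ! grep -qw -- "$name" "$hosts_file" 2>/dev/null; then
      echo "$entry" >> "$hosts_file"
    fi
  done
}
```

On each node, run `add_host_entries` (or `add_host_entries /etc/hosts`) as root, then check with `ping -c 1 juno-controller`.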

③ Synchronize the clocks of all nodes with NTP.
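In the official Juno guide the Controller Node syncs with upstream NTP servers and every other node syncs with the Controller. A minimal sketch for the Network and Compute nodes, assuming the `ntp` package and its /etc/ntp.conf, and the Controller address 10.0.0.11 from the install scripts. `point_ntp_at_controller` is a hypothetical helper: it comments out the existing `server` lines and adds one for the Controller.

```shell
#!/bin/bash
# Hypothetical helper for the Network/Compute nodes: make the Controller the
# only NTP server. Safe to run repeatedly.
point_ntp_at_controller() {
  local conf=${1:-/etc/ntp.conf}
  local controller=${2:-10.0.0.11}        # assumed Controller Management IP
  # Comment out every active "server ..." line except the Controller's own
  sed -i '/^server '"$controller"' iburst$/!s/^server /#server /' "$conf"
  # Add the Controller once; iburst speeds up the initial synchronization
  grep -qx "server $controller iburst" "$conf" ||
    echo "server $controller iburst" >> "$conf"
}
```

After editing, enable and start the daemon with `systemctl enable ntpd.service` and `systemctl start ntpd.service`, then check with `ntpq -p`.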

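④ A Block Storage Node provides the block storage space for the volumes that instances use. Because I did not have a separate Block Storage Node, this guide attaches a local block device directly to the Compute Node instead. A 100 GB disk, /dev/sdb, was added to the Compute Node, and the LVM volume group cinder-volumes is created on it: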

# pvcreate /dev/sdb

# vgcreate cinder-volumes /dev/sdb
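The official Juno installation guide also suggests an LVM device filter, so that the host's LVM scanner does not pick up LVM volumes that tenants create inside their Cinder volumes. A minimal sketch, assuming /dev/sdb holds cinder-volumes and that the OS disk /dev/sda also uses LVM (the CentOS 7 default), which is why it must stay accepted. `add_lvm_filter` is a hypothetical helper; adjust the device names to your system before using it on a real /etc/lvm/lvm.conf.

```shell
#!/bin/bash
# Hypothetical helper: add a filter line inside the "devices {" section of an
# lvm.conf that accepts the listed disks and rejects every other device.
# Usage: add_lvm_filter /etc/lvm/lvm.conf sda sdb
add_lvm_filter() {
  local conf=$1; shift
  local accept="" dev
  for dev in "$@"; do
    accept+="\"a/${dev}/\", "                 # accept pattern per listed disk
  done
  local line="filter = [ ${accept}\"r/.*/\" ]"  # reject everything else
  # Insert right after the "devices {" line, only if not already present
  grep -qF "$line" "$conf" ||
    sed -i "/^devices {/a ${line}" "$conf"
}
```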

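⑤ The OpenStack command-line clients (keystone, glance, and so on) need several environment variables, which are set from an environment file called an OpenStack rc file. This guide names the rc file admin-openrc.sh. The install scripts include code that creates it, so you do not need to prepare it in advance.

An OpenStack rc file usually contains environment variables such as these: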

export OS_TENANT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=$ADMIN_PWD

export OS_AUTH_URL=http://$CONTROLLER_IP:35357/v2.0
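The install scripts build admin-openrc.sh with four `echo` lines. An equivalent sketch with a single here-document, assuming the same variable names as the scripts (`CONTROLLER_IP`, `ADMIN_PWD`). `write_openrc` is a hypothetical helper. The password is written inside single quotes so that characters such as `$` or spaces survive when the file is sourced; a password that itself contains a single quote would still need extra escaping.

```shell
#!/bin/bash
# Hypothetical helper: write an OpenStack rc file for the admin user.
# Assumes CONTROLLER_IP and ADMIN_PWD are already set, as in the install scripts.
write_openrc() {
  local out=${1:-admin-openrc.sh}
  # Unquoted EOF: ${...} is expanded now; the closing EOF must start in column 0
  cat > "$out" <<EOF
export OS_TENANT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD='${ADMIN_PWD}'
export OS_AUTH_URL=http://${CONTROLLER_IP}:35357/v2.0
EOF
  chmod 600 "$out"   # the file contains a password
}
```

Usage: `write_openrc admin-openrc.sh && source admin-openrc.sh`.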

2. Installing the Controller Node

① Run the following script.

install_controller.sh

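If you build the script by copying and pasting the code below and it fails with a "here-document delimited by end-of-file" error, the problem is in the EOF (here-document) block. The paste inserted tab characters at the start of the lines between the opening `<<EOF` line and the closing `EOF` line, including the closing line itself, so bash never finds the terminator, which must start at the beginning of its line. Deleting those tabs fixes the error.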

http://stackoverflow.com/questions/18660798/here-document-delimited-by-end-of-file
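A quick way to reproduce the problem, and the `<<-` form that tolerates tab indentation. `heredoc_demo` is a throwaway helper written for this illustration; it needs only bash and mktemp. Note that `<<-` strips leading TAB characters only, so lines indented with spaces still have to be cleaned up by hand.

```shell
#!/bin/bash
# heredoc_demo OP writes a tiny script whose body AND closing "EOF" line are
# indented with a TAB (as often happens after copy and paste), then runs it.
#   OP='<<'  : the tab-indented "EOF" is not recognized as the terminator, so
#              bash reads to end-of-file and prints a warning on stderr.
#   OP='<<-' : bash strips leading TABs from every line, so "EOF" matches.
heredoc_demo() {
  local op=$1 script tab
  tab=$(printf '\t')
  script=$(mktemp)
  printf 'cat %sEOF\n%shello\n%sEOF\n' "$op" "$tab" "$tab" > "$script"
  bash "$script"
  rm -f "$script"
}

heredoc_demo '<<-'   # prints: hello
heredoc_demo '<<'    # prints the indented lines (EOF included) and warns on stderr
```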

#!/bin/bash

#get the configuration info

CONTROLLER_IP='10.0.0.11'

ADMIN_TOKEN=$(openssl rand -hex 10)

SERVICE_PWD='P@ssworD'

ADMIN_PWD='P@ssworD'

META_PWD='P@ssworD'

THISHOST_IP='10.0.0.11'

#openstack repos

yum -y install yum-plugin-priorities

yum -y install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

yum -y install http://rdo.fedorapeople.org/openstack-juno/rdo-release-juno.rpm

yum -y upgrade

yum -y install openstack-selinux

#loosen things up

systemctl stop firewalld.service

systemctl disable firewalld.service

sed -i 's/enforcing/disabled/g' /etc/selinux/config

echo 0 > /sys/fs/selinux/enforce

#install database server

yum -y install mariadb mariadb-server MySQL-python

#edit /etc/my.cnf

sed -i.bak "10i\\

bind-address = $CONTROLLER_IP\n\

default-storage-engine = innodb\n\

innodb_file_per_table\n\

collation-server = utf8_general_ci\n\

init-connect = 'SET NAMES utf8'\n\

character-set-server = utf8\n\

" /etc/my.cnf

#start database server

systemctl enable mariadb.service

systemctl start mariadb.service

echo 'now run through the mysql_secure_installation'

mysql_secure_installation

#create databases

echo 'Enter the new MySQL root password'

mysql -u root -p <<EOF

CREATE DATABASE nova;

CREATE DATABASE cinder;

CREATE DATABASE glance;

CREATE DATABASE keystone;

CREATE DATABASE neutron;

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'localhost' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON cinder.* TO 'cinder'@'%' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY '$SERVICE_PWD';

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY '$SERVICE_PWD';

FLUSH PRIVILEGES;

EOF

#install messaging service

yum -y install rabbitmq-server

systemctl enable rabbitmq-server.service

systemctl start rabbitmq-server.service

#install keystone

yum -y install openstack-keystone python-keystoneclient

#edit /etc/keystone.conf

sed -i.bak "s/#admin_token=ADMIN/admin_token=$ADMIN_TOKEN/g" /etc/keystone/keystone.conf

sed -i "/\[database\]/a \

connection = mysql://keystone:$SERVICE_PWD@$CONTROLLER_IP/keystone" /etc/keystone/keystone.conf

sed -i "/\[token\]/a \

provider = keystone.token.providers.uuid.Provider\n\

driver = keystone.token.persistence.backends.sql.Token\n" /etc/keystone/keystone.conf

#finish keystone setup

keystone-manage pki_setup --keystone-user keystone --keystone-group keystone

chown -R keystone:keystone /var/log/keystone

chown -R keystone:keystone /etc/keystone/ssl

chmod -R o-rwx /etc/keystone/ssl

su -s /bin/sh -c "keystone-manage db_sync" keystone

#start keystone

systemctl enable openstack-keystone.service

systemctl start openstack-keystone.service

#schedule token purge

(crontab -l -u keystone 2>&1 | grep -q token_flush) || \

echo '@hourly /usr/bin/keystone-manage token_flush >/var/log/keystone/keystone-tokenflush.log 2>&1' \

>> /var/spool/cron/keystone

#create users and tenants

export OS_SERVICE_TOKEN=$ADMIN_TOKEN

export OS_SERVICE_ENDPOINT=http://$CONTROLLER_IP:35357/v2.0

keystone tenant-create --name admin --description "Admin Tenant"

keystone user-create --name admin --pass $ADMIN_PWD

keystone role-create --name admin

keystone user-role-add --tenant admin --user admin --role admin

keystone role-create --name _member_

keystone user-role-add --tenant admin --user admin --role _member_

keystone tenant-create --name demo --description "Demo Tenant"

keystone user-create --name demo --pass password

keystone user-role-add --tenant demo --user demo --role _member_

keystone tenant-create --name service --description "Service Tenant"

keystone service-create --name keystone --type identity \

--description "OpenStack Identity"

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ identity / {print $2}') \

--publicurl http://$CONTROLLER_IP:5000/v2.0 \

--internalurl http://$CONTROLLER_IP:5000/v2.0 \

--adminurl http://$CONTROLLER_IP:35357/v2.0 \

--region regionOne

unset OS_SERVICE_TOKEN OS_SERVICE_ENDPOINT

echo "export OS_TENANT_NAME=admin" > admin-openrc.sh

echo "export OS_USERNAME=admin" >> admin-openrc.sh

echo "export OS_PASSWORD=$ADMIN_PWD" >> admin-openrc.sh

echo "export OS_AUTH_URL=http://$CONTROLLER_IP:35357/v2.0" >> admin-openrc.sh

source admin-openrc.sh

#create keystone entries for glance

keystone user-create --name glance --pass $SERVICE_PWD

keystone user-role-add --user glance --tenant service --role admin

keystone service-create --name glance --type image \

--description "OpenStack Image Service"

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ image / {print $2}') \

--publicurl http://$CONTROLLER_IP:9292 \

--internalurl http://$CONTROLLER_IP:9292 \

--adminurl http://$CONTROLLER_IP:9292 \

--region regionOne

#install glance

yum -y install openstack-glance python-glanceclient

#edit /etc/glance/glance-api.conf

sed -i.bak "/\[database\]/a \

connection = mysql://glance:$SERVICE_PWD@$CONTROLLER_IP/glance" /etc/glance/glance-api.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = glance\n\

admin_password = $SERVICE_PWD" /etc/glance/glance-api.conf

sed -i "/\[paste_deploy\]/a \

flavor = keystone" /etc/glance/glance-api.conf

sed -i "/\[glance_store\]/a \

default_store = file\n\

filesystem_store_datadir = /var/lib/glance/images/" /etc/glance/glance-api.conf

#edit /etc/glance/glance-registry.conf

sed -i.bak "/\[database\]/a \

connection = mysql://glance:$SERVICE_PWD@$CONTROLLER_IP/glance" /etc/glance/glance-registry.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = glance\n\

admin_password = $SERVICE_PWD" /etc/glance/glance-registry.conf

sed -i "/\[paste_deploy\]/a \

flavor = keystone" /etc/glance/glance-registry.conf

#start glance

su -s /bin/sh -c "glance-manage db_sync" glance

systemctl enable openstack-glance-api.service openstack-glance-registry.service

systemctl start openstack-glance-api.service openstack-glance-registry.service

#create the keystone entries for nova

keystone user-create --name nova --pass $SERVICE_PWD

keystone user-role-add --user nova --tenant service --role admin

keystone service-create --name nova --type compute \

--description "OpenStack Compute"

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ compute / {print $2}') \

--publicurl http://$CONTROLLER_IP:8774/v2/%\(tenant_id\)s \

--internalurl http://$CONTROLLER_IP:8774/v2/%\(tenant_id\)s \

--adminurl http://$CONTROLLER_IP:8774/v2/%\(tenant_id\)s \

--region regionOne

#install the nova controller components

yum -y install openstack-nova-api openstack-nova-cert openstack-nova-conductor \

openstack-nova-console openstack-nova-novncproxy openstack-nova-scheduler \

python-novaclient

#edit /etc/nova/nova.conf

sed -i.bak "/\[database\]/a \

connection = mysql://nova:$SERVICE_PWD@$CONTROLLER_IP/nova" /etc/nova/nova.conf

sed -i "/\[DEFAULT\]/a \

rpc_backend = rabbit\n\

rabbit_host = $CONTROLLER_IP\n\

auth_strategy = keystone\n\

my_ip = $CONTROLLER_IP\n\

vncserver_listen = $CONTROLLER_IP\n\

vncserver_proxyclient_address = $CONTROLLER_IP\n\

network_api_class = nova.network.neutronv2.api.API\n\

security_group_api = neutron\n\

linuxnet_interface_driver = nova.network.linux_net.LinuxOVSInterfaceDriver\n\

firewall_driver = nova.virt.firewall.NoopFirewallDriver" /etc/nova/nova.conf

sed -i "/\[keystone_authtoken\]/i \

[database]\nconnection = mysql://nova:$SERVICE_PWD@$CONTROLLER_IP/nova" /etc/nova/nova.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = nova\n\

admin_password = $SERVICE_PWD" /etc/nova/nova.conf

sed -i "/\[glance\]/a host = $CONTROLLER_IP" /etc/nova/nova.conf

sed -i "/\[neutron\]/a \

url = http://$CONTROLLER_IP:9696\n\

auth_strategy = keystone\n\

admin_auth_url = http://$CONTROLLER_IP:35357/v2.0\n\

admin_tenant_name = service\n\

admin_username = neutron\n\

admin_password = $SERVICE_PWD\n\

service_metadata_proxy = True\n\

metadata_proxy_shared_secret = $META_PWD" /etc/nova/nova.conf

#start nova

su -s /bin/sh -c "nova-manage db sync" nova

systemctl enable openstack-nova-api.service openstack-nova-cert.service \

openstack-nova-consoleauth.service openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

systemctl start openstack-nova-api.service openstack-nova-cert.service \

openstack-nova-consoleauth.service openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

#create keystone entries for neutron

keystone user-create --name neutron --pass $SERVICE_PWD

keystone user-role-add --user neutron --tenant service --role admin

keystone service-create --name neutron --type network \

--description "OpenStack Networking"

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ network / {print $2}') \

--publicurl http://$CONTROLLER_IP:9696 \

--internalurl http://$CONTROLLER_IP:9696 \

--adminurl http://$CONTROLLER_IP:9696 \

--region regionOne

#install neutron

yum -y install openstack-neutron openstack-neutron-ml2 python-neutronclient which

#edit /etc/neutron/neutron.conf

sed -i.bak "/\[database\]/a \

connection = mysql://neutron:$SERVICE_PWD@$CONTROLLER_IP/neutron" /etc/neutron/neutron.conf

SERVICE_TENANT_ID=$(keystone tenant-list | awk '/ service / {print $2}')

sed -i '0,/\[DEFAULT\]/s//\[DEFAULT\]\

rpc_backend = rabbit\

rabbit_host = '"$CONTROLLER_IP"'\

auth_strategy = keystone\

core_plugin = ml2\

service_plugins = router\

allow_overlapping_ips = True\

notify_nova_on_port_status_changes = True\

notify_nova_on_port_data_changes = True\

nova_url = http:\/\/'"$CONTROLLER_IP"':8774\/v2\

nova_admin_auth_url = http:\/\/'"$CONTROLLER_IP"':35357\/v2.0\

nova_region_name = regionOne\

nova_admin_username = nova\

nova_admin_tenant_id = '"$SERVICE_TENANT_ID"'\

nova_admin_password = '"$SERVICE_PWD"'/' /etc/neutron/neutron.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = neutron\n\

admin_password = $SERVICE_PWD" /etc/neutron/neutron.conf

#edit /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2\]/a \

type_drivers = flat,gre\n\

tenant_network_types = gre\n\

mechanism_drivers = openvswitch" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2_type_gre\]/a \

tunnel_id_ranges = 1:1000" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[securitygroup\]/a \

enable_security_group = True\n\

enable_ipset = True\n\

firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver" /etc/neutron/plugins/ml2/ml2_conf.ini

#start neutron

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \

--config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade juno" neutron

systemctl restart openstack-nova-api.service openstack-nova-scheduler.service \

openstack-nova-conductor.service

systemctl enable neutron-server.service

systemctl start neutron-server.service

#install dashboard

yum -y install openstack-dashboard httpd mod_wsgi memcached python-memcached

#edit /etc/openstack-dashboard/local_settings

sed -i.bak "s/ALLOWED_HOSTS = \['horizon.example.com', 'localhost'\]/ALLOWED_HOSTS = ['*']/" /etc/openstack-dashboard/local_settings

sed -i 's/OPENSTACK_HOST = "127.0.0.1"/OPENSTACK_HOST = "'"$CONTROLLER_IP"'"/' /etc/openstack-dashboard/local_settings

#start dashboard

setsebool -P httpd_can_network_connect on

chown -R apache:apache /usr/share/openstack-dashboard/static

systemctl enable httpd.service memcached.service

systemctl start httpd.service memcached.service

#create keystone entries for cinder

keystone user-create --name cinder --pass $SERVICE_PWD

keystone user-role-add --user cinder --tenant service --role admin

keystone service-create --name cinder --type volume \

--description "OpenStack Block Storage"

keystone service-create --name cinderv2 --type volumev2 \

--description "OpenStack Block Storage"

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ volume / {print $2}') \

--publicurl http://$CONTROLLER_IP:8776/v1/%\(tenant_id\)s \

--internalurl http://$CONTROLLER_IP:8776/v1/%\(tenant_id\)s \

--adminurl http://$CONTROLLER_IP:8776/v1/%\(tenant_id\)s \

--region regionOne

keystone endpoint-create \

--service-id $(keystone service-list | awk '/ volumev2 / {print $2}') \

--publicurl http://$CONTROLLER_IP:8776/v2/%\(tenant_id\)s \

--internalurl http://$CONTROLLER_IP:8776/v2/%\(tenant_id\)s \

--adminurl http://$CONTROLLER_IP:8776/v2/%\(tenant_id\)s \

--region regionOne

#install cinder controller

yum -y install openstack-cinder python-cinderclient python-oslo-db

#edit /etc/cinder/cinder.conf

sed -i.bak "/\[database\]/a connection = mysql://cinder:$SERVICE_PWD@$CONTROLLER_IP/cinder" /etc/cinder/cinder.conf

sed -i "/^\[DEFAULT\]/a \

rpc_backend = rabbit\n\

rabbit_host = $CONTROLLER_IP\n\

auth_strategy = keystone\n\

my_ip = $CONTROLLER_IP" /etc/cinder/cinder.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = cinder\n\

admin_password = $SERVICE_PWD" /etc/cinder/cinder.conf

#start cinder controller

su -s /bin/sh -c "cinder-manage db sync" cinder

systemctl enable openstack-cinder-api.service openstack-cinder-scheduler.service

systemctl start openstack-cinder-api.service openstack-cinder-scheduler.service
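The script edits every INI file by appending lines right after a section header with `sed ... a`. That is short, but not idempotent: running the script a second time adds every setting again. As an alternative sketch (not what the script above does), here is a hypothetical `ini_set` helper that sets one key in one section, replacing an existing uncommented value or adding it at the end of the section, and creating the section if it is missing. Tools such as `crudini` do the same job more robustly. Limitations of this sketch: KEY is used as a regular expression, and VALUE must not contain backslashes because `awk -v` interprets escape sequences.

```shell
#!/bin/bash
# Hypothetical helper: ini_set FILE SECTION KEY VALUE
ini_set() {
  local file=$1 section=$2 key=$3 value=$4 tmp
  tmp=$(mktemp)
  awk -v sec="[$section]" -v key="$key" -v val="$value" '
    function emit() { print key " = " val; done = 1 }
    /^\[/ {                              # a section header
      if (insec && !done) emit()         # leaving the target section: add key
      insec = ($0 == sec)
      if (insec) seen = 1
    }
    insec && $0 ~ ("^" key "[ \t]*=") {  # existing uncommented key line
      if (!done) emit()                  # replace the first one
      next                               # and drop it (and any duplicates)
    }
    { print }
    END {
      if (!done) {
        if (!seen) print sec             # section missing: create it
        emit()
      }
    }' "$file" > "$tmp" &&
    cat "$tmp" > "$file"                 # rewrite in place, keeping owner and mode
  rm -f "$tmp"
}
```

Usage: `ini_set /etc/nova/nova.conf glance host "$CONTROLLER_IP"` sets `host` under `[glance]` no matter how many times it runs.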

② After the script finishes, check the status of the services.

[root@juno-controller ~]# for service in mariadb.service rabbitmq-server.service openstack-keystone.service openstack-glance-api.service openstack-glance-registry.service openstack-nova-api.service openstack-nova-cert.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service neutron-server.service httpd.service memcached.service openstack-cinder-api.service openstack-cinder-scheduler.service ; do systemctl list-unit-files | grep ${service} ; done

rabbitmq-server.service enabled

openstack-keystone.service enabled

openstack-glance-api.service enabled

openstack-glance-registry.service enabled

openstack-nova-api.service enabled

openstack-nova-cert.service enabled

openstack-nova-consoleauth.service enabled

openstack-nova-scheduler.service enabled

openstack-nova-conductor.service enabled

openstack-nova-novncproxy.service enabled

neutron-server.service enabled

httpd.service enabled

memcached.service enabled

openstack-cinder-api.service enabled

openstack-cinder-scheduler.service enabled

[root@juno-controller ~]# openstack-status

== Nova services ==

openstack-nova-api: active

openstack-nova-cert: active

openstack-nova-compute: inactive (disabled on boot)

openstack-nova-network: inactive (disabled on boot)

openstack-nova-scheduler: active

openstack-nova-conductor: active

== Glance services ==

openstack-glance-api: active

openstack-glance-registry: active

== Keystone service ==

openstack-keystone: active

== Horizon service ==

openstack-dashboard: active

== neutron services ==

neutron-server: active

neutron-dhcp-agent: inactive (disabled on boot)

neutron-l3-agent: inactive (disabled on boot)

neutron-metadata-agent: inactive (disabled on boot)

neutron-lbaas-agent: inactive (disabled on boot)

== Cinder services ==

openstack-cinder-api: active

openstack-cinder-scheduler: active

openstack-cinder-volume: inactive (disabled on boot)

openstack-cinder-backup: inactive (disabled on boot)

== Support services ==

mysqld: inactive (disabled on boot)

dbus: active

rabbitmq-server: active

memcached: active

== Keystone users ==

Warning keystonerc not sourced

[root@juno-controller ~]#
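The check loop above pipes `systemctl list-unit-files` through `grep ${service}`, so a unit name that does not exist (for example a typo such as `mariadb.service.service`) simply prints nothing and is easy to miss. A minimal sketch of a stricter check, `check_enabled` (a hypothetical helper), that matches unit names exactly and reports anything missing or not enabled:

```shell
#!/bin/bash
# Hypothetical helper: read "systemctl list-unit-files" output on stdin and
# report every expected unit that is missing or not "enabled".
# Returns 0 only if all listed units are enabled.
check_enabled() {
  local listing unit state rc=0
  listing=$(cat)
  for unit in "$@"; do
    # exact match on the first column (the unit name); second column = state
    state=$(awk -v u="$unit" '$1 == u { print $2 }' <<< "$listing")
    if [ "$state" != "enabled" ]; then
      echo "NOT OK: $unit (${state:-not found})"
      rc=1
    fi
  done
  return $rc
}
```

Usage: `systemctl list-unit-files | check_enabled mariadb.service rabbitmq-server.service openstack-keystone.service`.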

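③ The easiest way to get a virtual machine image to run on OpenStack is to download one that someone else has already built.

CirrOS is a minimal Linux distribution designed for use as a test image on clouds such as OpenStack Compute. CirrOS images in several formats are available from the CirrOS download page.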

[root@juno-controller ~]# yum -y install wget

[root@juno-controller ~]# wget http://download.cirros-cloud.net/0.3.4/cirros-0.3.4-x86_64-disk.img

[root@juno-controller ~]# source admin-openrc.sh

[root@juno-controller ~]# glance image-create --name "cirros-0.3.4-x86_64" --file cirros-0.3.4-x86_64-disk.img --disk-format qcow2 --container-format bare --is-public True --progress
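Because `glance image-create` is told `--disk-format qcow2`, it is worth confirming that the downloaded file really is a QCOW2 image before uploading it; a failed or redirected download can leave an HTML page behind instead. QCOW2 files start with the 4-byte magic `QFI\xfb`. A minimal sketch with a hypothetical `is_qcow2` helper; if qemu-img is installed, `qemu-img info` reports the same information.

```shell
#!/bin/bash
# Hypothetical helper: succeed if FILE starts with the QCOW2 magic "QFI\xfb".
is_qcow2() {
  local magic
  # first 4 bytes as hex, spaces and newlines removed
  magic=$(head -c 4 -- "$1" | od -An -tx1 | tr -d ' \n')
  [ "$magic" = "514649fb" ]
}
```

Usage: `is_qcow2 cirros-0.3.4-x86_64-disk.img && glance image-create --name "cirros-0.3.4-x86_64" ...`.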

For more information about images, see the official OpenStack documentation.

http://docs.openstack.org/image-guide/content/ch_obtaining_images.html

3. Installing the Network Node

① Run the following script.

install_network_node.sh

#!/bin/bash

#get the configuration info

CONTROLLER_IP='10.0.0.11'

SERVICE_PWD='P@ssworD'

ADMIN_PWD='P@ssworD'

META_PWD='P@ssworD'

THISHOST_IP='10.0.0.21'

THISHOST_TUNNEL_IP='10.0.1.21'

#openstack repos

yum -y install yum-plugin-priorities

yum -y install epel-release

yum -y install http://rdo.fedorapeople.org/openstack-juno/rdo-release-juno.rpm

yum -y upgrade

yum -y install openstack-selinux

#loosen things up

systemctl stop firewalld.service

systemctl disable firewalld.service

sed -i 's/enforcing/disabled/g' /etc/selinux/config

echo 0 > /sys/fs/selinux/enforce

#get primary NIC info

for i in $(ls /sys/class/net); do

if [ "$(cat /sys/class/net/$i/ifindex)" == '3' ]; then

NIC=$i

MY_MAC=$(cat /sys/class/net/$i/address)

echo "$i ($MY_MAC)"

fi

done

echo "export OS_TENANT_NAME=admin" > admin-openrc.sh

echo "export OS_USERNAME=admin" >> admin-openrc.sh

echo "export OS_PASSWORD=$ADMIN_PWD" >> admin-openrc.sh

echo "export OS_AUTH_URL=http://$CONTROLLER_IP:35357/v2.0" >> admin-openrc.sh

source admin-openrc.sh

echo 'net.ipv4.ip_forward=1' >> /etc/sysctl.conf

echo 'net.ipv4.conf.all.rp_filter=0' >> /etc/sysctl.conf

echo 'net.ipv4.conf.default.rp_filter=0' >> /etc/sysctl.conf

sysctl -p

#install neutron

yum -y install openstack-neutron openstack-neutron-ml2 openstack-neutron-openvswitch

sed -i '0,/\[DEFAULT\]/s//\[DEFAULT\]\

rpc_backend = rabbit\

rabbit_host = '"$CONTROLLER_IP"'\

auth_strategy = keystone\

core_plugin = ml2\

service_plugins = router\

allow_overlapping_ips = True/' /etc/neutron/neutron.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = neutron\n\

admin_password = $SERVICE_PWD" /etc/neutron/neutron.conf

#edit /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2\]/a \

type_drivers = flat,gre\n\

tenant_network_types = gre\n\

mechanism_drivers = openvswitch" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2_type_flat\]/a \

flat_networks = external" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2_type_gre\]/a \

tunnel_id_ranges = 1:1000" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[securitygroup\]/a \

enable_security_group = True\n\

enable_ipset = True\n\

firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver\n\

[ovs]\n\

local_ip = $THISHOST_TUNNEL_IP\n\

enable_tunneling = True\n\

bridge_mappings = external:br-ex\n\

[agent]\n\

tunnel_types = gre" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[DEFAULT\]/a \

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver\n\

use_namespaces = True\n\

external_network_bridge = br-ex" /etc/neutron/l3_agent.ini

sed -i "/\[DEFAULT\]/a \

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver\n\

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq\n\

use_namespaces = True" /etc/neutron/dhcp_agent.ini

sed -i "s/auth_url/#auth_url/g" /etc/neutron/metadata_agent.ini

sed -i "s/auth_region/#auth_region/g" /etc/neutron/metadata_agent.ini

sed -i "s/admin_tenant_name/#admin_tenant_name/g" /etc/neutron/metadata_agent.ini

sed -i "s/admin_user/#admin_user/g" /etc/neutron/metadata_agent.ini

sed -i "s/admin_password/#admin_password/g" /etc/neutron/metadata_agent.ini

sed -i "/\[DEFAULT\]/a \

auth_url = http://$CONTROLLER_IP:5000/v2.0\n\

auth_region = regionOne\n\

admin_tenant_name = service\n\

admin_user = neutron\n\

admin_password = $SERVICE_PWD\n\

nova_metadata_ip = $CONTROLLER_IP\n\

metadata_proxy_shared_secret = $META_PWD" /etc/neutron/metadata_agent.ini

systemctl enable openvswitch.service

systemctl start openvswitch.service

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

cp /usr/lib/systemd/system/neutron-openvswitch-agent.service \

/usr/lib/systemd/system/neutron-openvswitch-agent.service.orig

sed -i 's,plugins/openvswitch/ovs_neutron_plugin.ini,plugin.ini,g' \

/usr/lib/systemd/system/neutron-openvswitch-agent.service

systemctl enable neutron-openvswitch-agent.service neutron-l3-agent.service \

neutron-dhcp-agent.service neutron-metadata-agent.service \

neutron-ovs-cleanup.service

systemctl start neutron-openvswitch-agent.service neutron-l3-agent.service \

neutron-dhcp-agent.service neutron-metadata-agent.service
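The Network Node script appends its kernel settings to /etc/sysctl.conf with `echo ... >>`, so running it a second time leaves duplicate lines behind (the Compute Node script does the same for rp_filter). `net.ipv4.ip_forward=1` lets the node route packets between networks, and `rp_filter=0` turns off reverse-path filtering. A minimal idempotent sketch using a hypothetical `set_sysctl` helper:

```shell
#!/bin/bash
# Hypothetical helper: set_sysctl KEY VALUE [FILE]
# Rewrites an existing "KEY=..." line in FILE, or appends one if none exists,
# so running it repeatedly never creates duplicates.
# (Dots in KEY act as regex wildcards here, which is harmless for these keys.)
set_sysctl() {
  local key=$1 value=$2 file=${3:-/etc/sysctl.conf}
  local re="^${key}[[:space:]]*="
  if grep -q "$re" "$file"; then
    sed -i "s|${re}.*|${key}=${value}|" "$file"
  else
    echo "${key}=${value}" >> "$file"
  fi
}
```

Usage on the Network Node: `set_sysctl net.ipv4.ip_forward 1`, `set_sysctl net.ipv4.conf.all.rp_filter 0`, `set_sysctl net.ipv4.conf.default.rp_filter 0`, then `sysctl -p`.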

② After the script finishes, check the status of the services.

[root@juno-network ~]# for service in openvswitch.service neutron-openvswitch-agent.service neutron-l3-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service ; do systemctl list-unit-files | grep ${service} ; done

openvswitch.service enabled

neutron-openvswitch-agent.service enabled

neutron-l3-agent.service enabled

neutron-dhcp-agent.service enabled

neutron-metadata-agent.service enabled

[root@juno-network ~]# openstack-status

== neutron services ==

neutron-server: inactive (disabled on boot)

neutron-dhcp-agent: active

neutron-l3-agent: active

neutron-metadata-agent: active

neutron-lbaas-agent: inactive (disabled on boot)

neutron-openvswitch-agent: active

== Support services ==

openvswitch: active

dbus: active

[root@juno-network ~]#

③ Check the state of the network interfaces.

[root@juno-network ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:45:ff:83 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.21/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe45:ff83/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:45:ff:8d brd ff:ff:ff:ff:ff:ff

inet 10.0.1.21/24 brd 10.0.1.255 scope global eth1

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe45:ff8d/64 scope link

valid_lft forever preferred_lft forever

4: eth2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP qlen 1000

link/ether 00:0c:29:45:ff:97 brd ff:ff:ff:ff:ff:ff

inet6 fe80::20c:29ff:fe45:ff97/64 scope link

valid_lft forever preferred_lft forever

5: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 56:c9:1d:24:7e:9a brd ff:ff:ff:ff:ff:ff

6: br-ex: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 00:0c:29:45:ff:97 brd ff:ff:ff:ff:ff:ff

7: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 36:49:4e:19:dd:49 brd ff:ff:ff:ff:ff:ff

8: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 82:c1:23:d0:b5:42 brd ff:ff:ff:ff:ff:ff

[root@juno-network ~]#
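In the output above, br-ex, br-int and br-tun are the Open vSwitch bridges, and eth2 is attached to ovs-system. A minimal sketch that checks `ip a` output for those bridges, using a hypothetical `check_bridges` helper; on a real node, `ovs-vsctl show` is the more direct check.

```shell
#!/bin/bash
# Hypothetical helper: read "ip a" output on stdin and check that each named
# interface is present (e.g. the OVS bridges br-ex, br-int, br-tun).
check_bridges() {
  local listing name rc=0
  listing=$(cat)
  for name in "$@"; do
    # interface header lines look like: "6: br-ex: <BROADCAST,MULTICAST> mtu 1500 ..."
    if ! awk -v n="$name:" '$2 == n { found = 1 } END { exit !found }' <<< "$listing"; then
      echo "missing: $name"
      rc=1
    fi
  done
  return $rc
}
```

Usage: `ip a | check_bridges br-ex br-int br-tun`.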

④ After the Network Node script finishes, reconfigure the external network interface as a port of the Open vSwitch bridge br-ex by creating or editing the ifcfg-br-ex and ifcfg-eth2 files by hand. See the following page:

Neutron with existing external network

https://www.rdoproject.org/networking/neutron-with-existing-external-network/

/etc/sysconfig/network-scripts/ifcfg-br-ex

DEVICE=br-ex

DEVICETYPE=ovs

TYPE=OVSBridge

BOOTPROTO=static

IPADDR=172.32.0.21

NETMASK=255.255.255.0

GATEWAY=172.32.0.2

DNS1=168.126.63.1

ONBOOT=yes

/etc/sysconfig/network-scripts/ifcfg-eth2

DEVICE=eth2

HWADDR=00:0c:29:45:ff:97

TYPE=OVSPort

DEVICETYPE=ovs

OVS_BRIDGE=br-ex

ONBOOT=yes

[root@juno-network ~]# systemctl restart network
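The two files can also be generated from a script, which avoids copying the NIC's MAC address by hand. A minimal sketch with a hypothetical `write_br_ex_ifcfg` helper; the keys are the ones shown above, and the addresses you pass in must match your own external network. It writes into a directory given as its first argument (normally /etc/sysconfig/network-scripts), so it can be tried in a scratch directory first.

```shell
#!/bin/bash
# Hypothetical helper:
#   write_br_ex_ifcfg DIR NIC IPADDR NETMASK GATEWAY DNS [MAC]
# Writes DIR/ifcfg-br-ex (the OVS bridge that holds the external IP) and
# DIR/ifcfg-NIC (the NIC as an OVS port of br-ex). If MAC is omitted, it is
# read from /sys/class/net/NIC/address.
write_br_ex_ifcfg() {
  local dir=$1 nic=$2 ip=$3 mask=$4 gw=$5 dns=$6
  local mac=${7:-$(cat "/sys/class/net/$nic/address")}

  cat > "$dir/ifcfg-br-ex" <<EOF
DEVICE=br-ex
DEVICETYPE=ovs
TYPE=OVSBridge
BOOTPROTO=static
IPADDR=$ip
NETMASK=$mask
GATEWAY=$gw
DNS1=$dns
ONBOOT=yes
EOF

  cat > "$dir/ifcfg-$nic" <<EOF
DEVICE=$nic
HWADDR=$mac
TYPE=OVSPort
DEVICETYPE=ovs
OVS_BRIDGE=br-ex
ONBOOT=yes
EOF
}
```

Usage with this guide's values: `write_br_ex_ifcfg /etc/sysconfig/network-scripts eth2 172.32.0.21 255.255.255.0 172.32.0.2 168.126.63.1`, then `systemctl restart network`.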

4. Installing the Compute Node

① Run the following script.

install_compute_node.sh

#!/bin/bash

#get the configuration info

CONTROLLER_IP='10.0.0.11'

SERVICE_PWD='P@ssworD'

ADMIN_PWD='P@ssworD'

THISHOST_IP='10.0.0.31'

THISHOST_TUNNEL_IP='10.0.1.31'

#openstack repos

yum -y install yum-plugin-priorities

yum -y install epel-release

yum -y install http://rdo.fedorapeople.org/openstack-juno/rdo-release-juno.rpm

yum -y upgrade

yum -y install openstack-selinux

#loosen things up

systemctl stop firewalld.service

systemctl disable firewalld.service

sed -i 's/enforcing/disabled/g' /etc/selinux/config

echo 0 > /sys/fs/selinux/enforce

echo 'net.ipv4.conf.all.rp_filter=0' >> /etc/sysctl.conf

echo 'net.ipv4.conf.default.rp_filter=0' >> /etc/sysctl.conf

sysctl -p

#get primary NIC info

for i in $(ls /sys/class/net); do

if [ "$(cat /sys/class/net/$i/ifindex)" == '3' ]; then

NIC=$i

MY_MAC=$(cat /sys/class/net/$i/address)

echo "$i ($MY_MAC)"

fi

done

#nova compute

yum -y install openstack-nova-compute sysfsutils libvirt-daemon-config-nwfilter

sed -i.bak "/\[DEFAULT\]/a \

rpc_backend = rabbit\n\

rabbit_host = $CONTROLLER_IP\n\

auth_strategy = keystone\n\

my_ip = $THISHOST_IP\n\

vnc_enabled = True\n\

vncserver_listen = 0.0.0.0\n\

vncserver_proxyclient_address = $THISHOST_IP\n\

novncproxy_base_url = http://$CONTROLLER_IP:6080/vnc_auto.html\n\

network_api_class = nova.network.neutronv2.api.API\n\

security_group_api = neutron\n\

linuxnet_interface_driver = nova.network.linux_net.LinuxOVSInterfaceDriver\n\

firewall_driver = nova.virt.firewall.NoopFirewallDriver" /etc/nova/nova.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = nova\n\

admin_password = $SERVICE_PWD" /etc/nova/nova.conf

sed -i "/\[glance\]/a host = $CONTROLLER_IP" /etc/nova/nova.conf

#if compute node is virtual - change virt_type to qemu

if [ $(egrep -c '(vmx|svm)' /proc/cpuinfo) == "0" ]; then

sed -i '/\[libvirt\]/a virt_type = qemu' /etc/nova/nova.conf

fi

#install neutron

yum -y install openstack-neutron-ml2 openstack-neutron-openvswitch

sed -i '0,/\[DEFAULT\]/s//\[DEFAULT\]\

rpc_backend = rabbit\

rabbit_host = '"$CONTROLLER_IP"'\

auth_strategy = keystone\

core_plugin = ml2\

service_plugins = router\

allow_overlapping_ips = True/' /etc/neutron/neutron.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = neutron\n\

admin_password = $SERVICE_PWD" /etc/neutron/neutron.conf

#edit /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2\]/a \

type_drivers = flat,gre\n\

tenant_network_types = gre\n\

mechanism_drivers = openvswitch" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[ml2_type_gre\]/a \

tunnel_id_ranges = 1:1000" /etc/neutron/plugins/ml2/ml2_conf.ini

sed -i "/\[securitygroup\]/a \

enable_security_group = True\n\

enable_ipset = True\n\

firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver\n\

[ovs]\n\

local_ip = $THISHOST_TUNNEL_IP\n\

enable_tunneling = True\n\

[agent]\n\

tunnel_types = gre" /etc/neutron/plugins/ml2/ml2_conf.ini

systemctl enable openvswitch.service

systemctl start openvswitch.service

sed -i "/\[neutron\]/a \

url = http://$CONTROLLER_IP:9696\n\

auth_strategy = keystone\n\

admin_auth_url = http://$CONTROLLER_IP:35357/v2.0\n\

admin_tenant_name = service\n\

admin_username = neutron\n\

admin_password = $SERVICE_PWD" /etc/nova/nova.conf

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

cp /usr/lib/systemd/system/neutron-openvswitch-agent.service \

/usr/lib/systemd/system/neutron-openvswitch-agent.service.orig

sed -i 's,plugins/openvswitch/ovs_neutron_plugin.ini,plugin.ini,g' \

/usr/lib/systemd/system/neutron-openvswitch-agent.service

systemctl enable libvirtd.service openstack-nova-compute.service

systemctl start libvirtd.service

systemctl start openstack-nova-compute.service

systemctl enable neutron-openvswitch-agent.service

systemctl start neutron-openvswitch-agent.service

yum -y install openstack-cinder targetcli python-oslo-db MySQL-python

sed -i.bak "/\[database\]/a connection = mysql://cinder:$SERVICE_PWD@$CONTROLLER_IP/cinder" /etc/cinder/cinder.conf

sed -i '0,/\[DEFAULT\]/s//\[DEFAULT\]\

rpc_backend = rabbit\

rabbit_host = '"$CONTROLLER_IP"'\

auth_strategy = keystone\

my_ip = '"$THISHOST_IP"'\

iscsi_helper = lioadm/' /etc/cinder/cinder.conf

sed -i "/\[keystone_authtoken\]/a \

auth_uri = http://$CONTROLLER_IP:5000/v2.0\n\

identity_uri = http://$CONTROLLER_IP:35357\n\

admin_tenant_name = service\n\

admin_user = cinder\n\

admin_password = $SERVICE_PWD" /etc/cinder/cinder.conf

systemctl enable openstack-cinder-volume.service target.service

systemctl start openstack-cinder-volume.service target.service

echo "export OS_TENANT_NAME=admin" > admin-openrc.sh

echo "export OS_USERNAME=admin" >> admin-openrc.sh

echo "export OS_PASSWORD=$ADMIN_PWD" >> admin-openrc.sh

echo "export OS_AUTH_URL=http://$CONTROLLER_IP:35357/v2.0" >> admin-openrc.sh

source admin-openrc.sh

※ If you do build a separate Block Storage Node, attach the block device to the Block Storage Node only, and run the code at lines 128 to 145 of install_compute_node.sh (the Cinder part, from `yum -y install openstack-cinder ...` to `systemctl start openstack-cinder-volume.service target.service`) on the Block Storage Node only.
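The Compute Node script switches nova to plain QEMU emulation when the CPU exposes no hardware virtualization flag (`vmx` for Intel VT-x, `svm` for AMD-V), which is typical when the Compute Node is itself a VM without nested virtualization; otherwise libvirt's default, KVM, is kept. The same test as a small function, `pick_virt_type` (a hypothetical helper), that can be pointed at any cpuinfo-style file:

```shell
#!/bin/bash
# Hypothetical helper: print the libvirt virt_type to use.
# No vmx/svm CPU flag -> no hardware acceleration -> fall back to qemu.
pick_virt_type() {
  local cpuinfo=${1:-/proc/cpuinfo}
  if grep -Eqw 'vmx|svm' "$cpuinfo"; then
    echo kvm
  else
    echo qemu
  fi
}
```

Usage: `[ "$(pick_virt_type)" = qemu ] && sed -i '/\[libvirt\]/a virt_type = qemu' /etc/nova/nova.conf`.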

② After the script finishes, check the status of the services

[root@juno-compute ~]# for service in openvswitch.service libvirtd.service openstack-nova-compute.service neutron-openvswitch-agent.service openstack-cinder-volume.service ; do systemctl list-unit-files | grep ${service} ; done

openvswitch.service enabled

libvirtd.service enabled

openstack-nova-compute.service enabled

neutron-openvswitch-agent.service enabled

openstack-cinder-volume.service enabled

[root@juno-compute ~]# openstack-status

== Nova services ==

openstack-nova-api: inactive (disabled on boot)

openstack-nova-compute: active

openstack-nova-network: inactive (disabled on boot)

openstack-nova-scheduler: inactive (disabled on boot)

== neutron services ==

neutron-server: inactive (disabled on boot)

neutron-dhcp-agent: inactive (disabled on boot)

neutron-l3-agent: inactive (disabled on boot)

neutron-metadata-agent: inactive (disabled on boot)

neutron-lbaas-agent: inactive (disabled on boot)

neutron-openvswitch-agent: active

== Cinder services ==

openstack-cinder-api: inactive (disabled on boot)

openstack-cinder-scheduler: inactive (disabled on boot)

openstack-cinder-volume: active

openstack-cinder-backup: inactive (disabled on boot)

== Support services ==

openvswitch: active

dbus: active

target: active

Warning novarc not sourced

[root@juno-compute ~]#
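The checks above are done by eye. The following is a minimal sketch that turns the openstack-status check into a pass/fail test. It assumes the output keeps the "name: state" layout shown above; padding spaces after the colon are tolerated. report_inactive is a hypothetical helper, and it flags anything other than exactly "active".

```shell
#!/bin/sh
# report_inactive SERVICE... < openstack-status output
# Prints "service: state" for every SERVICE whose state is not exactly
# "active" (a service missing from the input is reported as "missing").
# Returns 0 only if all listed services are active.
report_inactive() {
    status_text=$(cat)
    rc=0
    for svc in "$@"; do
        # Find the "svc: state" line and print the trimmed state text.
        state=$(printf '%s\n' "$status_text" | awk -F':' -v s="$svc" '
            $1 == s {
                v = substr($0, index($0, ":") + 1)
                sub(/^[ \t]+/, "", v); sub(/[ \t]+$/, "", v)
                print v
                exit
            }')
        if [ "$state" != "active" ]; then
            printf '%s: %s\n' "$svc" "${state:-missing}"
            rc=1
        fi
    done
    return "$rc"
}
# Usage on this Compute node (services taken from the output above):
#   openstack-status | report_inactive openstack-nova-compute \
#       neutron-openvswitch-agent openstack-cinder-volume openvswitch target
```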

③ Check the network status

[root@juno-compute ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:63:b2:ad brd ff:ff:ff:ff:ff:ff

inet 10.0.0.31/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe63:b2ad/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:63:b2:b7 brd ff:ff:ff:ff:ff:ff

inet 10.0.1.31/24 brd 10.0.1.255 scope global eth1

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe63:b2b7/64 scope link

valid_lft forever preferred_lft forever

4: ovs-system: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 4e:08:56:fb:62:ae brd ff:ff:ff:ff:ff:ff

5: br-int: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 4a:19:e9:ee:dd:46 brd ff:ff:ff:ff:ff:ff

6: br-tun: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN

link/ether 16:a1:38:c9:29:48 brd ff:ff:ff:ff:ff:ff

[root@juno-compute ~]# ovs-vsctl show

bab9d7a8-e763-407a-b1e4-d9286f83443f

Bridge br-tun

Port patch-int

Interface patch-int

type: patch

options: {peer=patch-tun}

Port "gre-0a000115"

Interface "gre-0a000115"

type: gre

options: {df_default="true", in_key=flow, local_ip="10.0.1.31", out_key=flow, remote_ip="10.0.1.21"}

Port br-tun

Interface br-tun

type: internal

Bridge br-int

fail_mode: secure

Port patch-tun

Interface patch-tun

type: patch

options: {peer=patch-int}

Port br-int

Interface br-int

type: internal

ovs_version: "2.1.3"

[root@juno-compute ~]#
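In the ovs-vsctl output, the tunnel port gre-0a000115 carries the remote endpoint in its name. The Juno Open vSwitch agent names each tunnel port after the remote tunnel IP written as eight hex digits, and 0a000115 is 10.0.1.21, the remote_ip listed for the same port. The following is a minimal sketch for converting in both directions, assuming POSIX sh. ip_to_hex and hex_to_ip are hypothetical helpers, and later Neutron releases may name tunnel ports differently.

```shell
#!/bin/sh
# ip_to_hex IPV4 -> 8 lowercase hex digits, e.g. 10.0.1.21 -> 0a000115
ip_to_hex() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the dotted quad into $1..$4
    IFS=$old_ifs
    printf '%02x%02x%02x%02x\n' "$1" "$2" "$3" "$4"
}

# hex_to_ip NAME -> dotted quad, e.g. gre-0a000115 -> 10.0.1.21
hex_to_ip() {
    h=${1##*-}         # drop a "gre-" style prefix if present
    printf '%d.%d.%d.%d\n' \
        "0x$(printf '%s' "$h" | cut -c1-2)" "0x$(printf '%s' "$h" | cut -c3-4)" \
        "0x$(printf '%s' "$h" | cut -c5-6)" "0x$(printf '%s' "$h" | cut -c7-8)"
}
```

By the same rule, the tunnel peer of this node would name its port toward 10.0.1.31 (this node's eth1) gre-0a00011f.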

5. Create the initial networks

For details on how to create the initial networks, see the official OpenStack documentation:

http://docs.openstack.org/juno/install-guide/install/yum/content/neutron_initial-external-network.html

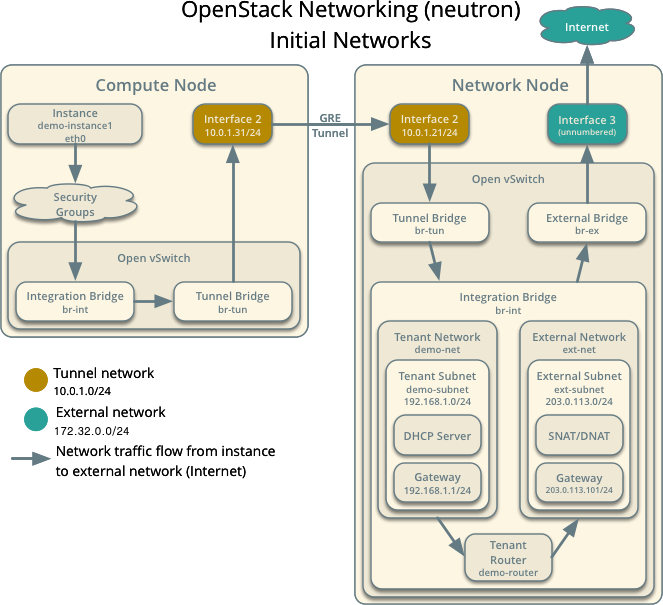

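Before launching your first instance, you must create the necessary virtual network infrastructure to which the instance will connect, including the external network and the tenant network. After creating this infrastructure, we recommend that you verify connectivity and resolve any issues before proceeding further.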

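The figure above gives a basic architectural overview of the components that Networking implements for the initial networks, and shows how network traffic flows from an instance to the external network or the Internet.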

5.1. External network

The external network typically provides Internet access for your instances. By default, this network only allows Internet access from instances using Network Address Translation (NAT). You can enable Internet access to individual instances using a floating IP address and suitable security group rules. The admin tenant owns this network because it provides external network access for multiple tenants.

5.1.1. Create the external network

Source the admin credentials to gain access to admin-only CLI commands:

$ source admin-openrc.sh
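If the rc file was not sourced in the current shell (for example, in a new login session), the neutron commands that follow have no credentials to use. The following is a minimal sketch of a guard that checks for the four variables admin-openrc.sh exports, assuming POSIX sh. require_os_env is a hypothetical helper.

```shell
#!/bin/sh
# require_os_env
# Returns 1 and lists the missing names on stderr if any of the variables
# exported by an OpenStack rc file (admin-openrc.sh here) is empty or unset.
require_os_env() {
    missing=""
    for var in OS_TENANT_NAME OS_USERNAME OS_PASSWORD OS_AUTH_URL; do
        eval "val=\${$var:-}"      # indirect lookup of the variable named $var
        [ -n "$val" ] || missing="$missing $var"
    done
    if [ -n "$missing" ]; then
        echo "not set:$missing (source an openrc file first)" >&2
        return 1
    fi
    return 0
}
# Usage: require_os_env && neutron net-list
```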

Create the network:

$ neutron net-create ext-net --router:external True \
--provider:physical_network external --provider:network_type flat

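Like a physical network, a virtual network requires a subnet assigned to it. The external network shares the same subnet and gateway as the physical network connected to the external interface on the Network node. You should specify an exclusive slice of this subnet for router and floating IP addresses to prevent interference with other devices on the external network.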

5.1.2. Create a subnet on the external network

Create the subnet:

$ neutron subnet-create ext-net --name ext-subnet \
--allocation-pool start=FLOATING_IP_START,end=FLOATING_IP_END \
--disable-dhcp --gateway EXTERNAL_NETWORK_GATEWAY EXTERNAL_NETWORK_CIDR

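Replace FLOATING_IP_START and FLOATING_IP_END with the first and last IP addresses of the range that you want to allocate for floating IP addresses. Replace EXTERNAL_NETWORK_CIDR with the subnet associated with the physical network.

Replace EXTERNAL_NETWORK_GATEWAY with the gateway associated with the physical network, typically the ".1" IP address. You should disable DHCP on this subnet because instances do not connect directly to the external network and floating IP addresses require manual assignment.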

For example, using 172.32.0.0/24 with a floating IP address range of 172.32.0.100 to 172.32.0.199 (the gateway in this environment is 172.32.0.2):

$ neutron subnet-create ext-net --name ext-subnet \
--allocation-pool start=172.32.0.100,end=172.32.0.199 \
--disable-dhcp --gateway 172.32.0.2 172.32.0.0/24
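The external subnet needs an exclusive slice for router and floating IP addresses. The following is a minimal sketch that sanity-checks the values before running subnet-create. It assumes POSIX sh with 64-bit arithmetic. ip_to_int and check_pool are hypothetical helpers, and they only perform the obvious checks (pool inside the subnet, gateway usable and outside the pool). They do not reproduce whatever validation Neutron itself performs, and they cannot detect other devices already using addresses in the pool.

```shell
#!/bin/sh
# ip_to_int IPV4 -> the address as an unsigned 32-bit integer
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# check_pool CIDR GATEWAY POOL_START POOL_END
# Prints one line per problem found and returns 1 if there was any.
check_pool() {
    addr=$(ip_to_int "${1%/*}")
    size=$(( 1 << (32 - ${1#*/}) ))
    first=$(( addr - addr % size ))      # network address
    last=$(( first + size - 1 ))         # broadcast address
    gw=$(ip_to_int "$2")
    start=$(ip_to_int "$3")
    end=$(ip_to_int "$4")
    rc=0
    if [ "$start" -gt "$end" ]; then
        echo "pool start $3 is after pool end $4"; rc=1
    fi
    if [ "$start" -le "$first" ] || [ "$end" -ge "$last" ]; then
        echo "pool $3-$4 is not inside the usable range of $1"; rc=1
    fi
    if [ "$gw" -le "$first" ] || [ "$gw" -ge "$last" ]; then
        echo "gateway $2 is not a usable address in $1"; rc=1
    fi
    if [ "$gw" -ge "$start" ] && [ "$gw" -le "$end" ]; then
        echo "gateway $2 lies inside the floating IP pool"; rc=1
    fi
    return "$rc"
}
```

With the example values, check_pool 172.32.0.0/24 172.32.0.2 172.32.0.100 172.32.0.199 prints nothing and returns 0.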

5.2. Tenant network

The tenant network provides internal network access for instances. The architecture isolates this type of network from other tenants. The demo tenant owns this network because it only provides network access for instances within it.

5.2.1. Create the tenant network

Source the demo credentials to gain access to user-only CLI commands:

$ source demo-openrc.sh
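The compute node script above writes only admin-openrc.sh, so demo-openrc.sh may have to be written by hand. The following is a minimal sketch that follows the same pattern. It assumes a demo tenant and user already exist in Keystone; the official guide creates them during the Identity service setup, but whether install_controller.sh does is not shown here. It uses the public Identity port 5000, as the official Juno guide does for demo-openrc.sh. The function name and its arguments are hypothetical.

```shell
#!/bin/sh
# write_demo_openrc CONTROLLER_IP DEMO_PWD [FILE]
# Writes an rc file for the demo user (default file name: demo-openrc.sh).
write_demo_openrc() {
    file=${3:-demo-openrc.sh}
    # The heredoc lines and the closing EOF must not be indented with tabs,
    # otherwise bash reports "here-document delimited by end-of-file".
    cat > "$file" <<EOF
export OS_TENANT_NAME=demo
export OS_USERNAME=demo
export OS_PASSWORD=$2
export OS_AUTH_URL=http://$1:5000/v2.0
EOF
    chmod 600 "$file"   # the file contains a password
}
# Usage: write_demo_openrc "$CONTROLLER_IP" "$DEMO_PWD" && source demo-openrc.sh
```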

Create the network:

$ neutron net-create demo-net

Like the external network, the tenant network requires a subnet attached to it. You can specify any valid subnet because the architecture isolates tenant networks. By default, this subnet uses DHCP, so your instances can obtain IP addresses.

5.2.2. Create a subnet on the tenant network

Create the subnet:

$ neutron subnet-create demo-net --name demo-subnet \
--gateway TENANT_NETWORK_GATEWAY TENANT_NETWORK_CIDR
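The two placeholders in the command above are related, because the gateway is conventionally the first host address of the tenant CIDR. The following is a minimal sketch that derives one from the other, assuming POSIX sh with 64-bit arithmetic. first_host and TENANT_CIDR are hypothetical names, and 192.168.1.0/24 is only an example value.

```shell
#!/bin/sh
# ip_to_int IPV4 -> unsigned 32-bit integer
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# int_to_ip N -> dotted quad
int_to_ip() {
    printf '%d.%d.%d.%d\n' $(( ($1 >> 24) & 255 )) $(( ($1 >> 16) & 255 )) \
        $(( ($1 >> 8) & 255 )) $(( $1 & 255 ))
}

# first_host CIDR -> first usable address (network address + 1),
# which is the conventional ".1" gateway for a /24.
first_host() {
    addr=$(ip_to_int "${1%/*}")
    size=$(( 1 << (32 - ${1#*/}) ))
    int_to_ip $(( addr - addr % size + 1 ))
}
# Usage with a hypothetical tenant CIDR:
#   TENANT_CIDR=192.168.1.0/24
#   neutron subnet-create demo-net --name demo-subnet \
#   --gateway "$(first_host "$TENANT_CIDR")" "$TENANT_CIDR"
```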

Replace TENANT_NETWORK_CIDR with the subnet you want to associate with the tenant network, and TENANT_NETWORK_GATEWAY with the gateway you want to associate with it, typically the ".1" IP address.

A virtual router passes network traffic between two or more virtual networks. Each router requires one or more interfaces and/or gateways that provide access to specific networks. In this case, you will create a router and attach the tenant and external networks to it.

5.3. Create a router on the tenant network and attach the external and tenant networks to it

Create the router:

$ neutron router-create demo-router

Attach the router to the demo tenant subnet (demo-subnet):

$ neutron router-interface-add demo-router demo-subnet

Attach the router to the external network (ext-net) by setting it as the router's gateway:

$ neutron router-gateway-set demo-router ext-net

5.4. Verify connectivity

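We recommend that you verify network connectivity and resolve any issues before proceeding further. Following the external subnet example using 172.32.0.0/24, the tenant router gateway should occupy the lowest IP address in the floating IP address range, 172.32.0.100. If you configured the external physical network and the virtual networks correctly, you should be able to ping this IP address (172.32.0.100) from any host on the external physical network.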

5.4.1. To verify network connectivity

Ping the tenant router gateway:

[root@juno-network ~]# ping -c 3 172.32.0.100

PING 172.32.0.100 (172.32.0.100) 56(84) bytes of data.

64 bytes from 172.32.0.100: icmp_seq=1 ttl=64 time=28.5 ms

64 bytes from 172.32.0.100: icmp_seq=2 ttl=64 time=0.122 ms

64 bytes from 172.32.0.100: icmp_seq=3 ttl=64 time=0.073 ms

--- 172.32.0.100 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2002ms

rtt min/avg/max/mdev = 0.073/9.596/28.593/13.432 ms

[root@juno-network ~]#
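To repeat this check from a script, for example after re-running the router commands, the following minimal sketch reads the packet-loss figure from the summary line that iputils ping prints, in the format shown above. packet_loss and gateway_reachable are hypothetical helpers, and 172.32.0.100 is the router gateway address from this example.

```shell
#!/bin/sh
# packet_loss < ping output
# Prints the number in front of "% packet loss" from ping's summary line,
# e.g. "3 packets transmitted, 3 received, 0% packet loss, time 2002ms" -> 0
packet_loss() {
    sed -n 's/.* \([0-9.]*\)% packet loss.*/\1/p'
}

# gateway_reachable IP
# Succeeds only if all 3 pings to IP are answered.
gateway_reachable() {
    loss=$(ping -c 3 "$1" 2>/dev/null | packet_loss)
    [ "$loss" = "0" ]
}
# Usage, from a host on the external physical network:
#   gateway_reachable 172.32.0.100 && echo "router gateway reachable"
```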